The new global study, in partnership with The Upwork Research Institute, interviewed 2,500 global C-suite executives, full-time employees and freelancers. Results show that the optimistic expectations about AI’s impact are not aligning with the reality faced by many employees. The study identifies a disconnect between the high expectations of managers and the actual experiences of employees using AI.

Despite 96% of C-suite executives expecting AI to boost productivity, the study reveals that 77% of employees using AI say it has added to their workload and created challenges in achieving the expected productivity gains. Not only is AI increasing the workloads of full-time employees, it’s hampering productivity and contributing to employee burnout.

They tried implementing AI in a few of our systems and the results were always fucking useless. What we call “AI” can be helpful in some ways, but I’d bet the vast majority of it is bullshit half-assed implementations so companies can claim they’re using “AI”.

The one thing “AI” has improved in my life has been a banking app search function being slightly better.

Oh, and a porn game did okay with it as an art generator, but the creator was still strangely lazy about it. You’re telling me you can make infinite free pictures of big tittied goth girls and you only included a few?

Generating multiple pictures of the same character is actually pretty hard. For example, let’s say you’re making a visual novel with a bunch of anime girls. You spin up your generative AI, and it gives you a great picture of a girl with a good design in a neutral pose. We’ll call her Alice. Well, now you need a happy Alice, a sad Alice, a horny Alice, an Alice with her face covered with cum, a nude Alice, and a hyper breast expansion Alice. Getting the AI to recreate Alice, who does not exist in the training data, is going to be very difficult even once.

And all of this is multiplied ten times over if you want granular changes to a character. Let’s say you’re making a fat fetish game and Alice is supposed to gain weight as the player feeds her. Now you need everything I described, at 10 different weights. You’re going to need to be extremely specific with the AI and it’s probably going to produce dozens of incorrect pictures for every time it gets it right. Getting it right might just plain be impossible if the AI doesn’t understand the assignment well enough.

What were they trying to accomplish?

Looking like they were doing something with AI, no joke.

One example was “Freddy”, an AI for a ticketing system called Freshdesk: It would try to suggest other tickets it thought were related or helpful but they were, not one fucking time, related or helpful.

Ahh, those things - I’ve seen half a dozen platforms implement some version of that, and they’re always garbage. It’s such a weird choice, too, since we already have semi-useful recommendation systems that run on traditional algorithms.

It’s all about being able to say, “Look, we have AI!”

That’s pretty funny, since manually searching some keywords can usually provide helpful data. Should be pretty straightforward to automate even without an LLM.

Yep, we already wrote out all the documentation for everything too so it’s doubly useless lol. It sucked at pulling relevant KB articles too even though there are fields for everything. A written script for it would have been trivial to make if they wanted to make something helpful, but they really just wanted to get on that AI hype train regardless of usefulness.

TF-IDF and some light rules should work well and be significantly faster.
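For anyone curious what “TF-IDF and some light rules” could look like for related-ticket suggestions, here is a minimal sketch using only the Python standard library. It is not how Freshdesk or Freddy work; the ticket fields (`id`, `category`, `subject`, `body`), the helper names, and the fixed same-category boost are all hypothetical assumptions chosen for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split on anything that isn't a letter or digit."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_idf(docs):
    """Smoothed inverse document frequency for every term in the corpus."""
    n = len(docs)
    df = Counter()
    for tokens in docs:
        df.update(set(tokens))  # count each term once per document
    return {term: math.log((1 + n) / (1 + count)) + 1 for term, count in df.items()}


def tfidf_vector(tokens, idf):
    """Raw term frequency times IDF; terms unseen in the corpus are ignored."""
    tf = Counter(tokens)
    return {t: c * idf[t] for t, c in tf.items() if t in idf}


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def related_tickets(query, tickets, top_k=3, same_category_boost=0.2):
    """Rank past tickets by TF-IDF cosine similarity plus one simple rule:
    a ticket that already matches and shares the (hypothetical) 'category'
    field gets a fixed score boost."""
    corpus = [tokenize(t["subject"] + " " + t["body"]) for t in tickets]
    idf = build_idf(corpus)
    q_vec = tfidf_vector(tokenize(query["subject"] + " " + query["body"]), idf)
    scored = []
    for ticket, tokens in zip(tickets, corpus):
        score = cosine(q_vec, tfidf_vector(tokens, idf))
        if score > 0 and ticket.get("category") == query.get("category"):
            score += same_category_boost
        scored.append((score, ticket["id"]))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [tid for score, tid in scored[:top_k] if score > 0]


# Hypothetical example data, not taken from any real helpdesk.
tickets = [
    {"id": 101, "category": "email", "subject": "Outlook not syncing",
     "body": "Mailbox stuck, emails not syncing on laptop"},
    {"id": 102, "category": "printer", "subject": "Printer offline",
     "body": "Office printer shows offline status"},
    {"id": 103, "category": "email", "subject": "Password reset",
     "body": "User locked out after password expired"},
]
query = {"category": "email", "subject": "Emails not syncing",
         "body": "New emails not syncing to phone"}
print(related_tickets(query, tickets))  # → [101]
```

Only ticket 101 shares terms with the query, so it is the only suggestion; the password ticket gets no boost because the rule only applies to tickets that already match. A real version would presumably precompute the ticket vectors once instead of rebuilding them on every query as this sketch does.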

You mean the multi-billion dollar, souped-up autocorrect might not actually be able to replace the human workforce? I am shocked, shocked I say!

Do you think Sam Altman might have… gasp lied to his investors about its capabilities?

Nooooo. I mean, we have about 80 years of history in AI research, and the field is just full of overhyped promises that this particular tech is the holy grail of AI, only to end in disappointment each time, but this time will be different! /s

The article doesn’t mention OpenAI, GPT, or Altman.

Yeah, OpenAI, ChatGPT, and Sam Altman have no relevance to ~~AI~~ LLMs. No idea what I was thinking.

I prefer Claude, usually, but the article also does not mention LLMs. I use generative audio, image generation, and video generation at work as often as, if not more than, text generators.

Good point, but LLMs are both ubiquitous and the public face of “AI.” I think it’s fair to assign them a decent share of the blame for overpromising and underdelivering.

Aha, so this must all be Elon’s fault! And Microsoft!

There are lots of whipping boys these days that one can leap to criticize and get free upvotes.

> get free upvotes.

Versus those paid ones.

If someone wants to pay me to upvote them I’m open to negotiation.

I traded in my upvotes when I deleted my reddit account, and all I got was this stupid chip on my shoulder.

I have the opposite problem. Gen A.I. has tripled my productivity, but the C-suite here is barely catching up to 2005.

Have you tripled your billing/salary? Stop being a scab lol

The opposite, actually, I requested a reduction in hours and pay to work on my own AI stuff. The company’s going under, anyway.

Cool too

What do you do, just out of interest?

Soup to nuts video production.

Same, I’ve automated a lot of my tasks with AI. No way 77% is “hampered” by it.


I dunno, mishandling of AI can be worse than avoiding it entirely. There’s a middle manager here that runs everything her direct-report copywriter sends through ChatGPT, then sends the response back as a revision. She doesn’t add any context to the prompt, say who the audience is, or use the custom GPT that I made and shared. That copywriter is definitely hampered, but it’s not by AI, really, just run-of-the-mill manager PEBKAC.

I’m infuriated on their behalf.

E-fucking-xactly. I hate reading long-winded bullshit AI stories with a passion. Drivel, all of it.

What have you actually replaced/automated with AI?

Voiceover recording, noise reduction, rotoscoping, motion tracking, matte painting, transcription - and there’s a clear path forward to automate rough cuts and integrate all that with digital asset management. I used to do all of those things manually/practically.

e: I imagine the downvotes coming from the same people that 20 years ago told me digital video would never match the artistry of film.

> imagine the downvotes coming from the same people that 20 years ago told me digital video would never match the artistry of film.

They’re right, IMO. Practical effects still look and age better than (IMO very obvious) digital effects. Oh, and digital de-aging IMO looks like crap.

But, this will always remain an opinion battle anyway, because quantifying “artistry” is in and of itself a fool’s errand.

Digital video, not digital effects - I mean the guys I went to film school with that refused to touch digital videography.

This may come as a shock to you, but the vast majority of the world does not work in tech.

A lot of people are keen to hear that AI is bad, though, so the clicks go through on articles like this anyway.

> The study identifies a disconnect between the high expectations of managers and the actual experiences of employees using AI.

The trick is to be the one scamming your management with AI.

“The model is still training…”

“We will solve this <unsolvable problem> with Machine Learning”

“The performance is great on my machine but we still need to optimize it for mobile devices”

Ever since my Fortune 200 employer did a push for AI, I haven’t worked a day in a week.

AI is stupidly used a lot, but this seems odd. For me, GitHub Copilot has sped up writing code. Hard to say how much, but it definitely saves me seconds several times per day. It certainly hasn’t made my workload more…

Probably because the vast majority of the workforce does not work in tech but has had these clunky, failure-prone tools foisted on them by tech. Companies are inserting AI into everything, so what used to be a problem that could be solved in 5 steps now takes 6 steps, with the new step being “figure out how to bypass the AI to get to the actual human who can fix my problem”.

I’ve thought for a long time that there are a ton of legitimate business problems out there that could be solved with software. Not with AI. AI isn’t necessary, or even helpful, in most of these situations. The problem is that creating meaningful solutions requires the people who write the checks to actually understand some of these problems. I can count on one hand the number of business executives that I’ve met who were actually capable of that.

I’ll say that so far I’ve been pretty unimpressed by Codeium.

At the very most it has given me a few minutes total of value in the last 4 months.

I’ve gotten some benefit from various generic chat LLMs like ChatGPT, but most of that has been somewhat improved versions of the kind of info I was getting from Stack Exchange threads and the like.

There’s been some mild value in some cases, but so far nothing earth-shattering or worth a bunch of money.

I have never heard of Codeium but it says it’s free, which may explain why it sucks. Copilot is excellent. Completely life changing, no. That’s not the goal. The goal is to reduce the manual writing of predictable and boring lines of code and it succeeds at that.

I presume it depends on the area you would be working with and what technologies you are working with. I assume it does better for some popular things that tend to be very verbose and tedious.

My experience, including with a Copilot trial, has been like yours: a bit underwhelming. But I assume others must be getting some benefit.

They’ve got a guy at work whose job title is basically AI Evangelist. This is terrifying, given that it’s a financial tech firm handling twelve figures a year of business: the last place where people will put up with “plausible bullshit” in their products.

I grudgingly installed the Copilot plugin, but I’m not sure what it can do for me better than a snippet library.

I asked it to generate a test suite for a function, as a rudimentary exercise, so it was able to identify “yes, there are n return values, so write n test cases” and “You’re going to actually have to CALL the function under test”, but was unable to figure out how to build the object being fed in to trigger any of those cases; to do so would require grokking much of the code base. I didn’t need to burn half a barrel of oil for that.

I’d be hesitant to trust it with “summarize this obtuse spec document” when half the time said documents are self-contradictory or downright wrong. Again, plausible bullshit isn’t suitable.

Maybe the problem is that I’m too close to the specific problem. AI tooling might be better for open-ended or free-association “why not try glue on pizza” type discussions, but when you already know “send exactly 4-7-Q-unicorn emoji in this field or the transaction is converted from USD to KPW” having to coax the machine to come to that conclusion 100% of the time is harder than just doing it yourself.

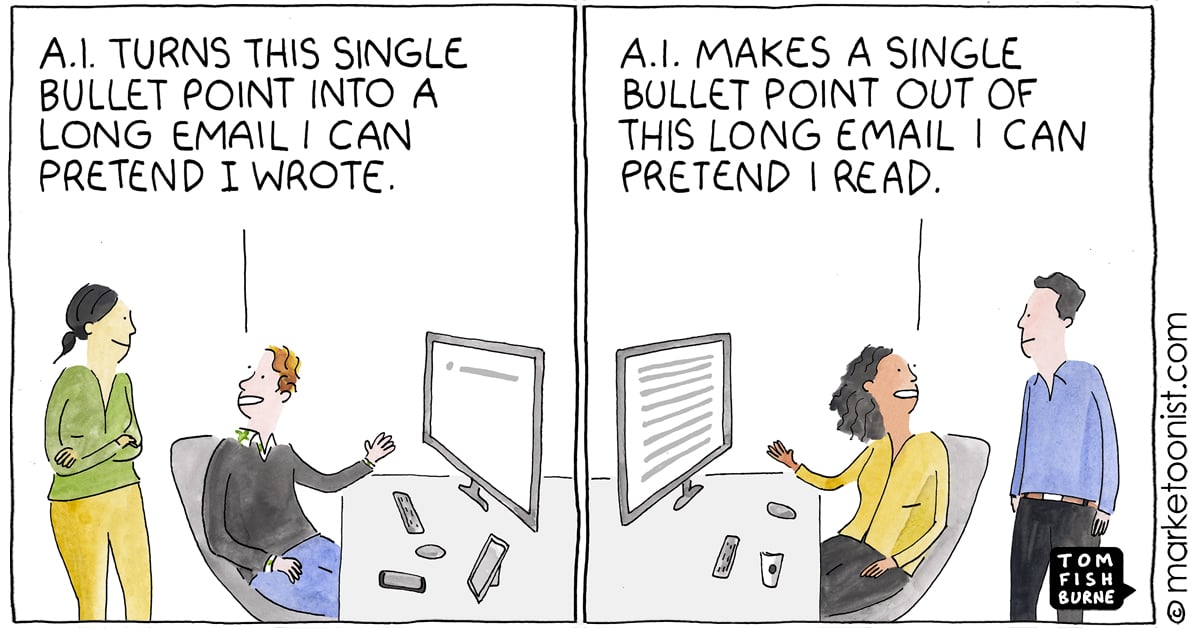

I can see why marketing and sales people love it, and maybe customer service too: click one button and turn one coherent “here’s why it’s broken” sentence into 500 words of flowery, says-nothing prose. But I demand better from my machine overlords.

Tell me when Stable Diffusion figures out that “Carrying battleaxe” doesn’t mean “katana randomly jutting out from forearms”, maybe at that point AI will be good enough for code.

This is an Upwork press release. Typical Forbes.

Large “language” models decreased my workload for translation. There’s a catch though: I choose when to use it, instead of being required to use it even when it doesn’t make sense and/or where I know that the output will be shitty.

And, if my guess is correct, those 77% are caused by overexcited decision-makers in corporations trying to shove AI into every single step of production.

The link to the study is just a “Paid Search Ad” page. Ouch for the professionalism of Forbes.

That was gone years ago. They’ve been a blog hosting site for quite a while.

But But But

It’s made my job so much simpler! Obviously it can’t do your whole job and you should never expect it to, but for simple tasks like generating a simple script or setting up an array it BLAH BLAH BLAH, get fucked AI Techbros lmao

The workload that’s starting now is spotting bad code written by colleagues using AI and persuading them to rewrite it.

“But it works!”

‘It pulls in 15 libraries, 2 of which you need to manually install beforehand, to achieve something you can do in 5 lines using this default library’

I was trying to find out how to get human readable timestamps from my shell history. They gave me this crazy script. It worked but it was super slow. Later I learned you could do history -i.

Turns out, a lot of the problems in nixland were solved 3 decades ago with a single flag of built-in utilities.

Wow shockingly employing a virtual dumbass who is confidently wrong all the time doesn’t help people finish their tasks.

It’s like employing a perpetually high idiot, but more productive while also being less useful. Instead of slow medicine you get fast garbage!

> The study identifies a disconnect between the high expectations of managers and the actual experiences of employees

Did we really need a study for that?

Me: no way, AI is very helpful, and if it isn’t then don’t use it

> created challenges in achieving the expected productivity gains

> achieving the expected productivity gains

Me: oh, that explains the issue.

It’s hilarious to watch it used well and then human nature just kick in

We started using some “smart tools” for scheduling manufacturing and it’s honestly been really really great and highlighted some shortcomings that we could easily attack and get easy high reward/low risk CAPAs out of.

Company decided to continue using the scheduling setup but not invest in a single opportunity we discovered which includes simple people processes. Took exactly 0 wins. Fuckin amazing.

You all are nuts for not seeing this article for what it is

Which is?

A hit-piece commissioned by the Joker to distract you from his upcoming bank heist!!!

Replace “the Joker” with “the media” and “distract you from his upcoming bank heist” with “convince you to hate AI”, then yes.

Do convince us why we should like something which is a massive ecological disaster in terms of fresh water and energy usage.

Feel free to do it while denying climate change is a problem if you wish.

I wrote this and fed it through ChatGPT to help make it more readable. To me that’s pretty awesome. If I wanted, I could have it written like an Elton John song. If that doesn’t convince you it’s fun and worth it, then maybe the argument below could, or not. Either way, I like it.

I don’t think I’ll convince you, but there are a lot of arguments to make here.

I heard a large AI model is equivalent to the emissions from five cars over its lifetime. And yes, the water usage is significant: something like 15 billion gallons a year just for a Microsoft data center. But that’s not just for AI; data centers are something we use even if we never touch AI. Absent AI, it’s not like we’re up in arms about the waste and usage from other technologies. AI is being singled out because it’s the star of the show right now.

But here’s why I think we should embrace it: the potential. I’m an optimist and I love technology. AI bridges gaps in so many areas, making things that were previously difficult much easier for many people. It can be an equalizer in various fields.

The potential with AI is fascinating to me. It could bring significant improvements in many sectors. Think about analyzing and optimizing power grids, making medical advances, improving economic forecasting, and creating jobs. It can reduce mundane tasks through personalized AI, like helping doctors take notes and process paperwork, freeing them up to see more patients.

Sure, it consumes energy and has costs, but its potential is huge. It’s here and advancing. If we keep letting the media convince us to hate it, this technology will end up hoarded by elites and possibly even made illegal for the rest of us. Imagine having a pocket advisor for anything: mechanical issues, legal questions, gardening problems, medical concerns. We’re not there yet, but remember, the first cell phones were the size of a brick. The potential is enormous, and considering all the things we waste energy and resources on, this one’s costs should be weighed against its benefits.

Not being able to use your own words to explain something to me and having the thing that is an ecological disaster that also lies all the time explain it to me instead really only reinforces my point that there’s no reason to like this technology.

It is my own words. I wrote out the whole thing, but I was never good with grammar and fully admit that what I write is often confusing or ambiguous. I can leverage ChatGPT the same way I would leverage spell check in Word. I don’t see any problems there.

But if you don’t mind, I’m interested in the points discussed.